The 1960s marked the very beginning of computer networking. This article describes the early history of computer networks during that decade.

The quality and reliability of the public switched telephone network (PSTN) increased significantly in 1962 with the introduction of digital transmission based on pulse code modulation (PCM), which converts analog voice signals into digital sequences of bits. DS0 (Digital Signal Zero) became the basic 64-Kbps channel, and the entire hierarchy of the digital telephone system was soon built on this foundation.
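The 64-Kbps figure follows from how PCM digitizes voice: the signal is sampled 8,000 times per second and each sample is encoded as 8 bits. The Python sketch below illustrates this under one simplifying assumption: it uses plain linear quantization, whereas real telephone PCM uses μ-law or A-law companding. The function `pcm_encode` and the 1-kHz test tone are hypothetical names chosen for illustration.

```python
import math

SAMPLE_RATE_HZ = 8000   # samples per second for telephone-grade voice
BITS_PER_SAMPLE = 8     # bits per sample in a DS0 channel

def pcm_encode(signal, duration_s, sample_rate=SAMPLE_RATE_HZ, bits=BITS_PER_SAMPLE):
    """Sample signal(t) (expected range -1.0..1.0) and quantize each sample
    to an integer code. Simplification: linear quantization, not mu-law/A-law."""
    levels = 2 ** bits
    n_samples = int(duration_s * sample_rate)
    codes = []
    for n in range(n_samples):
        t = n / sample_rate                            # time of the n-th sample
        x = max(-1.0, min(1.0, signal(t)))             # clip to the valid range
        code = round((x + 1.0) / 2.0 * (levels - 1))   # map -1..1 onto 0..255
        codes.append(code)
    return codes

def tone(t):
    # Hypothetical 1-kHz test tone standing in for a voice signal.
    return math.sin(2 * math.pi * 1000 * t)

codes = pcm_encode(tone, duration_s=1.0)
bit_rate = len(codes) * BITS_PER_SAMPLE   # bits produced per second of audio
print(len(codes), bit_rate)               # 8000 64000
```

One second of audio yields 8,000 codes of 8 bits each, which is exactly the 64,000 bits per second of a DS0 channel.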

Next, a device called the channel bank was introduced. It took 24 separate DS0 channels and combined them using time-division multiplexing (TDM) into a single 1.544-Mbps channel called DS1 or T1. (In Europe, 30 DS0 channels were combined to make E1.) When the backbone of the Bell System became digital, transmission quality improved, because digital repeaters regenerate a clean signal instead of amplifying the accumulated noise along with it.
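The T1 rate is slightly higher than 24 × 64 Kbps = 1.536 Mbps because each frame also carries one framing bit: 24 channels × 8 bits + 1 framing bit = 193 bits per frame, sent 8,000 times per second. The Python sketch below builds one such frame by interleaving one sample from each channel in a fixed order. It is a simplified illustration that ignores the actual framing-bit patterns (superframe and extended superframe) and robbed-bit signaling; the function `build_t1_frame` is hypothetical.

```python
FRAMES_PER_SECOND = 8000   # one frame per DS0 sampling interval
CHANNELS = 24              # DS0 channels carried by a DS1/T1
BITS_PER_SAMPLE = 8

def build_t1_frame(samples, framing_bit):
    """TDM sketch: take one 8-bit sample from each of the 24 channels, in
    channel order, and prepend a single framing bit (193 bits total)."""
    if len(samples) != CHANNELS:
        raise ValueError("a T1 frame carries exactly 24 channel samples")
    bits = [framing_bit]
    for sample in samples:                       # channel 1, 2, ..., 24
        for shift in range(BITS_PER_SAMPLE - 1, -1, -1):
            bits.append((sample >> shift) & 1)   # most significant bit first
    return bits

# Hypothetical sample values: channel i carries the value i.
frame = build_t1_frame(list(range(CHANNELS)), framing_bit=1)
payload_rate = CHANNELS * BITS_PER_SAMPLE * FRAMES_PER_SECOND   # voice bits/s
line_rate = len(frame) * FRAMES_PER_SECOND                      # total bits/s
print(len(frame), payload_rate, line_rate)   # 193 1536000 1544000
```

The same arithmetic applies to E1: 32 time slots × 8 bits × 8,000 frames per second gives 2.048 Mbps, with 30 slots carrying voice and two reserved for framing and signaling.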

Digital transmission was eventually extended all the way to local loop subscribers using ISDN (Integrated Services Digital Network). The first commercial touch-tone phone service followed in 1963.

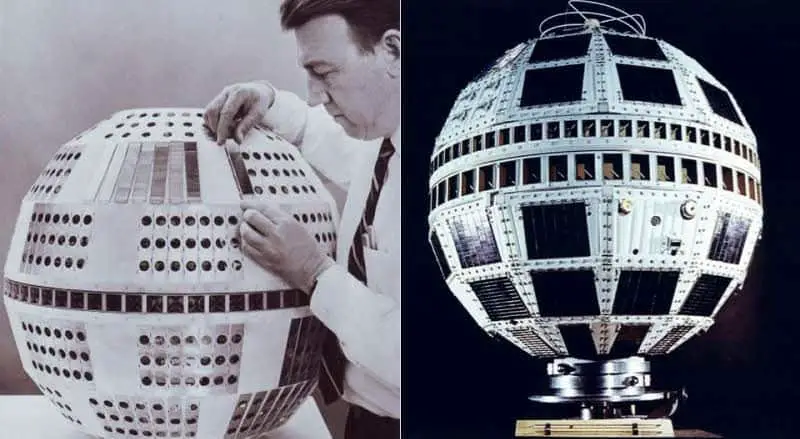

Telstar, an early communication satellite

Telstar, one of the first communication satellites, was launched in 1962. Satellite technology did not immediately affect the networking world, because satellite links had higher latency than undersea cables. Eventually, however, satellites surpassed transoceanic underwater telephone cables in carrying capacity; by 1965, such a cable could carry about 130 simultaneous conversations. Even earlier, in 1960, scientists at Bell Laboratories had transmitted a communication signal coast to coast across the United States by bouncing it off the moon. Unfortunately, the moon wouldn’t sit still! By 1965, the first commercial communication satellites (such as Early Bird) were deployed.
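The latency problem can be estimated with simple arithmetic. The Python sketch below compares the one-way propagation delay of a single hop through a geostationary satellite (the orbit Early Bird used) with that of an undersea cable. The 6,000-km cable route and the velocity factor of 0.66 are hypothetical round numbers chosen for illustration, and the satellite path assumes both ground stations sit directly beneath the satellite, which is the shortest possible path.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum
GEO_ALTITUDE_KM = 35_786            # geostationary altitude above the equator

def propagation_delay_s(distance_km, velocity_factor=1.0):
    """Seconds for a signal to cover distance_km at velocity_factor * c."""
    return distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor)

# One satellite hop: up to the satellite and back down (minimum path assumed).
satellite_hop = propagation_delay_s(2 * GEO_ALTITUDE_KM)

# Hypothetical transatlantic cable: ~6,000 km route, signal at ~0.66 c.
cable_hop = propagation_delay_s(6_000, velocity_factor=0.66)

print(f"satellite: {satellite_hop * 1000:.0f} ms, cable: {cable_hop * 1000:.0f} ms")
# satellite: 239 ms, cable: 30 ms
```

Under these assumptions a single satellite hop adds roughly 240 ms, about eight times the cable delay, and a spoken question and reply across it accumulate close to half a second of propagation delay alone.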

TELPAK, from the Bell System

Interestingly, in 1961 the Bell System proposed a new telecommunications service called TELPAK, which it claimed would lead to an “electronic highway” for communication, but it never pursued the idea. Could this have been a portent of the “information superhighway” of the 1990s?

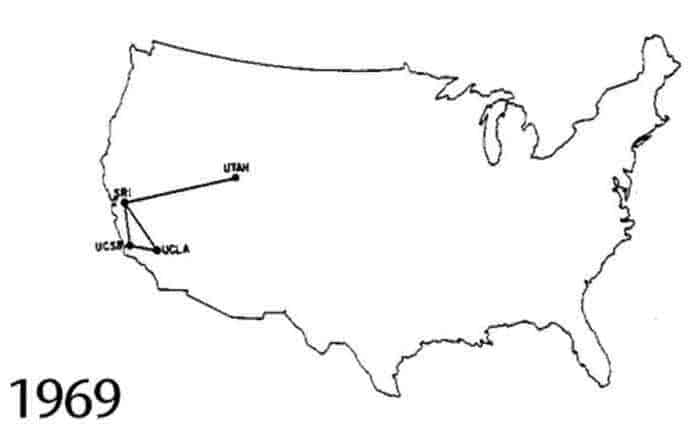

The year 1969 brought an event whose full significance was not realized until more than two decades later: the development of the ARPANET packet-switching network. ARPANET was a project of the U.S. Department of Defense’s Advanced Research Projects Agency (ARPA), which became DARPA in 1972. Similar efforts were underway in France and the United Kingdom, but the U.S. project evolved into the present-day Internet.

(France’s Minitel packet-switching system, which was based on the X.25 protocol and which aimed to bring data networking into every home, did take off in 1984 when the French government started giving away Minitel terminals; by the early 1990s, more than 20 percent of the country’s population was using it.)

The original ARPANET connected computers at the Stanford Research Institute (SRI), the University of California at Los Angeles (UCLA), the University of California at Santa Barbara (UCSB), and the University of Utah, with the first node installed at UCLA’s Network Measurement Center. A year later, Harvard, the Massachusetts Institute of Technology (MIT), and a few other institutions were added, but few of those involved realized that this technical experiment would someday profoundly affect business and society.

ARPANET – The beginning

1969 also saw the publication of the first Request for Comments (RFC) document, titled “Host Software.” The informal RFC process grew into the primary means of directing the development of the Internet. The early RFCs described the host-to-host software that became the Network Control Protocol (NCP), the first transport protocol of ARPANET.

UNIX system

That same year, Bell Laboratories developed UNIX, a multitasking, multi-user operating system that became popular in academic computing environments in the 1970s. A typical UNIX system in 1974 was a PDP-11 minicomputer with dumb terminals attached. In a configuration with 768 KB of magnetic core memory and a couple of 200-MB hard disks, the cost of such a system would have been around $40,000.

EBCDIC and ASCII

Standards for computer networking also evolved during the 1960s. In 1962, IBM introduced the first 8-bit character encoding system, called Extended Binary-Coded Decimal Interchange Code (EBCDIC). A year later, the competing American Standard Code for Information Interchange (ASCII) was introduced. ASCII ultimately won out over EBCDIC even though EBCDIC was 8-bit while ASCII was only 7-bit.

ASCII was formally standardized by the American National Standards Institute (ANSI) in 1968. It was first used for serial transmission between mainframe hosts and dumb terminals, but it was eventually adopted across all areas of computing and networking technology.
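To see how the two encodings differ in practice, the Python sketch below encodes the same string in ASCII and in EBCDIC. It assumes code page 037 (Python’s `cp037` codec, the US/Canada EBCDIC variant) as a representative EBCDIC table; IBM defined many EBCDIC code pages, and some characters have different byte values among them.

```python
text = "IBM 360"

ascii_bytes = text.encode("ascii")    # 7-bit ASCII, one byte per character
ebcdic_bytes = text.encode("cp037")   # EBCDIC, assuming code page 037

def to_hex(data):
    # Render bytes as space-separated two-digit hex values.
    return " ".join(f"{b:02x}" for b in data)

print(to_hex(ascii_bytes))    # 49 42 4d 20 33 36 30
print(to_hex(ebcdic_bytes))   # c9 c2 d4 40 f3 f6 f0

# Every ASCII code fits in 7 bits, so the high bit of each byte is 0.
assert all(b < 0x80 for b in ascii_bytes)
```

Besides the different byte values, EBCDIC letters are not contiguous: `"IJ".encode("cp037")` yields `b'\xc9\xd1'`, a gap that ASCII’s consecutive run of capital letters avoids.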

Other early developments

Other developments in the 1960s included the release in 1964 of IBM’s powerful System/360 mainframe computing environment, which was widely implemented in government, university, and corporate computing centers. IBM, which had introduced the first disk storage system in 1956 (50 two-foot-wide metal platters with a storage capacity of about 5 MB), began developing the first floppy disk in 1967. In 1970, Intel released the 1103, a RAM chip that stored 1 kilobit (1,024 bits) of information, which at the time was an amazing feat of engineering.