Definition of Quality of Service (QoS) in Network Encyclopedia.

What is Quality of Service (QoS)?

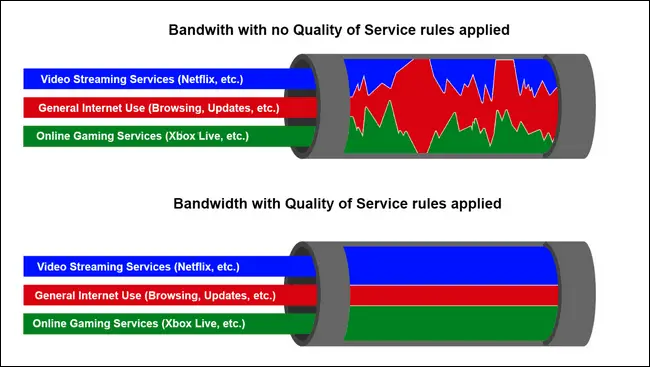

Broadly, Quality of Service (QoS) refers to any networking technology that provides predictable latency and data loss. More specifically, QoS is any mechanism that allows absolute or relative performance requirements to be defined for the different traffic streams carried over a network. In other words, a QoS-enabled network can guarantee a certain level of throughput for a specific path, connection, or type of traffic.

This makes it possible to ensure that critical network applications receive priority handling.

How It Works

Networks that support QoS mechanisms can generally control both the quality of a network transmission and the availability of the bandwidth needed to ensure that quality. Ordinary networks, by contrast, guarantee only best-effort delivery: traffic flow cannot be controlled and bandwidth cannot be reserved. If congestion occurs during a period of intense network communication, QoS features can give preference to the data streams of users and applications that need a consistent flow of data. For example, networks carrying real-time audio or video require a high level of QoS so that reception is smooth and free of errors. (Late or unevenly delivered packets in a real-time multimedia stream produce pauses and dropouts that are highly undesirable from the user’s point of view.)

You can control the following network properties in a network that supports QoS functions:

- Throughput (total bandwidth used)

- Latency (traffic delay)

- Priority (among types of traffic)

- Peak traffic, burstiness, and jitter (to smooth traffic flow)

- Packet or cell loss and retransmission
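As a rough illustration of the "priority" property, the sketch below shows strict-priority scheduling, one common way a QoS-capable device can give preferred traffic precedence during congestion. The class `StrictPriorityScheduler` and its methods are hypothetical names chosen for this example, not part of any particular product or standard.

```python
from collections import deque
from typing import Deque, List, Optional


class StrictPriorityScheduler:
    """Hypothetical strict-priority scheduler; queue 0 has the highest priority."""

    def __init__(self, num_classes: int) -> None:
        self.queues: List[Deque[str]] = [deque() for _ in range(num_classes)]

    def enqueue(self, packet: str, priority: int) -> None:
        self.queues[priority].append(packet)

    def dequeue(self) -> Optional[str]:
        # Serve the highest-priority queue that has traffic waiting;
        # lower-priority queues are served only when all higher ones are empty.
        for queue in self.queues:
            if queue:
                return queue.popleft()
        return None  # every queue is empty


sched = StrictPriorityScheduler(num_classes=3)
sched.enqueue("email-1", priority=2)
sched.enqueue("voice-1", priority=0)
sched.enqueue("video-1", priority=1)
sched.enqueue("voice-2", priority=0)
order = [sched.dequeue() for _ in range(4)]
print(order)  # ['voice-1', 'voice-2', 'video-1', 'email-1']
```

Strict priority is simple, but under sustained load it can starve lower-priority queues, which is why devices often combine it with rate limits or weighted scheduling.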

Asynchronous Transfer Mode (ATM) is one networking technology that can deliver data at specific levels of QoS. A look at the QoS features in ATM will give you an idea of the scope of issues that QoS can address. The QoS mechanisms of ATM function in the following areas:

- Traffic contracts: Specify QoS parameters for a data stream, such as peak and average bandwidth and maximum burst size. Each ATM end node negotiates a traffic contract with the network; the contract's QoS parameters control the type and amount of traffic that the host can send over an ATM circuit. If an ATM switch along the circuit cannot meet these requirements, it sends the transmitting host a message indicating that the connection is refused. Each virtual channel or virtual path that an end node establishes has its own traffic contract.

- Traffic shaping: ATM devices use data queues to smooth out traffic bursts and limit the peak data rate so that traffic stays within the negotiated traffic contract.

- Traffic policing: ATM switches enforce traffic contracts so that the agreed-upon QoS conditions of each data stream are met. If a stream violates its contract, the switch sets the cell-loss priority (CLP) bit of each offending cell, marking it as eligible to be dropped if congestion occurs at the switch.
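Policing can be illustrated with the Generic Cell Rate Algorithm (GCRA), which the ATM Forum traffic management specifications use to define whether cells conform to a contract. The sketch below implements its virtual-scheduling form and tags non-conforming cells by setting CLP to 1. The `Cell` and `GcraPolicer` names, the integer-microsecond clock, and the choice to tag every non-conforming cell rather than discard some of them are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cell:
    arrival_us: int  # arrival time at the switch, in microseconds
    clp: int = 0     # cell-loss priority bit: 1 marks the cell as droppable first


class GcraPolicer:
    """Generic Cell Rate Algorithm GCRA(I, L), virtual-scheduling form.

    increment_us: expected spacing between cells (1 / contracted cell rate)
    limit_us:     how early a cell may arrive and still conform (e.g. a CDV tolerance)
    """

    def __init__(self, increment_us: int, limit_us: int) -> None:
        self.increment_us = increment_us
        self.limit_us = limit_us
        self.tat_us: Optional[int] = None  # theoretical arrival time of the next cell

    def police(self, cell: Cell) -> bool:
        """Return True if the cell conforms; otherwise tag it (CLP = 1)."""
        t = cell.arrival_us
        if self.tat_us is None:
            self.tat_us = t  # the first cell always conforms
        if t < self.tat_us - self.limit_us:
            # Arrived too early: non-conforming. Tag it rather than drop it,
            # and leave the theoretical arrival time unchanged.
            cell.clp = 1
            return False
        self.tat_us = max(t, self.tat_us) + self.increment_us
        return True


# Hypothetical contract: one cell every 100 us (10,000 cells/s), 50 us tolerance.
policer = GcraPolicer(increment_us=100, limit_us=50)
cells = [Cell(t) for t in (0, 100, 150, 160, 400)]
results = [policer.police(c) for c in cells]
print(results)                 # [True, True, True, False, True]
print([c.clp for c in cells])  # [0, 0, 0, 1, 0]
```

The cell at 160 us arrives well ahead of its theoretical arrival time (300 us) minus the tolerance, so it is tagged; the later cell at 400 us conforms again because the schedule has caught up.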

The specific QoS parameters that can be negotiated in an ATM network are called traffic parameters. They include the following:

- Cell Delay Variation (CDV): The amount by which cell arrival times may deviate from the spacing implied by the peak cell rate. The CDV tolerance is specified by the network, not by the end station, and cells that arrive within it are accepted as conforming.

- Maximum Burst Size (MBS): The maximum number of cells that can be sent back-to-back at the peak cell rate.

- Peak Cell Rate (PCR): The maximum number of cells per second that can be transmitted, derived from the specified peak bandwidth.

- Sustainable Cell Rate (SCR): The maximum long-term average number of cells per second that can be transmitted, derived from the specified sustainable bandwidth.
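Because PCR and SCR are expressed in cells per second, a bandwidth figure has to be converted using the fixed 53-byte ATM cell. The minimal sketch below assumes the specified bandwidth counts the full cell, header included; if a bandwidth refers to payload only, divide by 384 bits (48 bytes) instead. The function names and sample bandwidths are hypothetical.

```python
CELL_BYTES = 53             # 5-byte header + 48-byte payload
CELL_BITS = CELL_BYTES * 8  # 424 bits per cell


def bandwidth_to_cell_rate(bandwidth_bps: float) -> float:
    """Convert a bandwidth in bits per second to cells per second."""
    return bandwidth_bps / CELL_BITS


def cell_interval_us(cell_rate: float) -> float:
    """Spacing between consecutive cells, in microseconds, at a given cell rate."""
    return 1_000_000 / cell_rate


pcr = bandwidth_to_cell_rate(10_000_000)  # hypothetical 10 Mbit/s peak bandwidth
scr = bandwidth_to_cell_rate(2_000_000)   # hypothetical 2 Mbit/s sustainable bandwidth
print(round(pcr, 1), round(scr, 1))       # 23584.9 4717.0
print(round(cell_interval_us(pcr), 1))    # 42.4
```

The reciprocal of the PCR (about 42.4 microseconds here) is the cell spacing that a policer expects from a source sending at its peak rate.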

The implementation of QoS in ATM allows ATM networks to support four classes of QoS, each with scalable levels:

- Constant Bit Rate (CBR): The traffic requires a guaranteed rate of transport and does not tolerate cell loss. The ATM end station informs the ATM network of the required QoS parameters at call setup. The network then performs admission control by reserving the necessary bandwidth or refusing the connection. The end station is responsible for complying with the agreed-upon peak data rate; if it exceeds this rate, the network drops the offending cells.

- Variable Bit Rate (VBR): Similar to CBR except that it negotiates a maximum burst size and maximum sustainable rate in addition to a peak data rate.

- Unspecified Bit Rate (UBR): Does not involve reserving bandwidth or establishing cell-loss ratios. UBR is commonly used in LAN emulation (IP-over-ATM networks that are typically used in campus backbones). If one cell is dropped because of congestion, the entire Internet Protocol (IP) packet to which the cell belongs must be retransmitted.

- Available Bit Rate (ABR): Adjusts the data transmission rate based on congestion feedback from the ATM network, carried in resource management (RM) cells that the source sends periodically. ABR is also used in LAN emulation (LANE) implementations.
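The admission control described for CBR can be sketched as simple bookkeeping on a single link: reserve bandwidth when a connection is accepted and refuse the connection when the link would be oversubscribed. This is a deliberately simplified model, not an ATM Forum algorithm: the `Link` class is hypothetical, VBR connections are assumed to need only their SCR (practical schemes often reserve more to absorb bursts), and ABR is not modeled.

```python
class Link:
    """Hypothetical connection admission control for one ATM link."""

    def __init__(self, capacity_cps: float) -> None:
        self.capacity_cps = capacity_cps  # link capacity, cells per second
        self.reserved_cps = 0.0           # bandwidth promised to admitted connections

    def admit(self, service: str, pcr: float, scr: float = 0.0) -> bool:
        """Reserve bandwidth and return True, or refuse and return False."""
        if service == "CBR":
            needed = pcr   # guaranteed constant rate: reserve the full peak cell rate
        elif service == "VBR":
            needed = scr   # simplification: reserve only the sustainable rate
        elif service == "UBR":
            needed = 0.0   # best effort: nothing is reserved
        else:
            raise ValueError(f"service category not modeled here: {service}")
        if self.reserved_cps + needed > self.capacity_cps:
            return False   # refuse the connection: the contract could not be honored
        self.reserved_cps += needed
        return True


link = Link(capacity_cps=100_000)
decisions = [
    link.admit("CBR", pcr=60_000),
    link.admit("VBR", pcr=50_000, scr=30_000),
    link.admit("CBR", pcr=20_000),  # 60k + 30k + 20k would exceed capacity
    link.admit("UBR", pcr=50_000),  # reserves nothing, so it is admitted
]
print(decisions, link.reserved_cps)  # [True, True, False, True] 90000.0
```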

NOTE

The QoS features of ATM give it an advantage over competing technologies such as Fast Ethernet and frame relay, but ATM is more difficult to implement because of its more complex architecture. However, you can use ATM backbone technology to backfill QoS features into connected Fast Ethernet and frame relay network structures, albeit awkwardly. Gigabit Ethernet also includes QoS features.

In best-effort TCP/IP internetworks such as the Internet, QoS in IP data streams is difficult to achieve. The Internet Engineering Task Force (IETF) has proposed two new mechanisms for providing QoS for the Internet and for TCP/IP internetworks: integrated services and differentiated services.

Integrated services aims to make TCP/IP internetworks suitable for a variety of services, such as audio, video, real-time data, and classical data traffic. Routers along a path perform admission control and schedule packets so that each admitted flow receives the service it requested. Applications request this service with the Resource Reservation Protocol (RSVP), which asks each router along the path to reserve resources for the flow and so maintain an even flow of data. Controlled-load service, one of the services defined by integrated services, gives a flow roughly the service it would receive on an unloaded network, even when the router is heavily loaded. Because integrated services requires every router along a path to support RSVP and keep per-flow state, deploying it would require considerable rebuilding of the core technology of the Internet.
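In the IETF specifications, an integrated-services flow describes its traffic with a token bucket, chiefly a token rate r and a bucket depth b (carried, for example, in an RSVP flow specification). The minimal sketch below checks packets against such a bucket; the `TokenBucket` class, byte units, and sample values are illustrative assumptions rather than part of RSVP itself.

```python
class TokenBucket:
    """Token bucket with token rate r (bytes/s) and bucket depth b (bytes)."""

    def __init__(self, rate: float, depth: float) -> None:
        self.rate = rate
        self.depth = depth
        self.tokens = depth   # start with a full bucket
        self.last_time = 0.0  # time of the previous update, in seconds

    def conforms(self, size: float, now: float) -> bool:
        """Return True if a packet of `size` bytes arriving at `now` conforms."""
        # Tokens accumulate at `rate`, but never beyond the bucket depth.
        self.tokens = min(self.depth, self.tokens + (now - self.last_time) * self.rate)
        self.last_time = now
        if size <= self.tokens:
            self.tokens -= size  # conforming packets consume tokens
            return True
        return False             # non-conforming: tokens are left untouched


# Hypothetical flow spec: r = 1,000 bytes/s, b = 1,500 bytes.
bucket = TokenBucket(rate=1_000, depth=1_500)
arrivals = [(0.0, 1_000), (0.5, 1_000), (0.75, 500), (2.0, 1_500)]
results = [bucket.conforms(size, t) for t, size in arrivals]
print(results)  # [True, True, False, True]
```

The depth b lets a flow send a burst up to b bytes at once, while r bounds its long-term average; the packet at 0.75 s fails because only 250 bytes of tokens have accumulated since the previous packet emptied the bucket.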

Differentiated services aggregates IP traffic into a small number of priority classes serviced by routers, which would enable ISPs to offer varying levels of priority to their customers. Differentiated services uses the DS field in the IPv4 packet header to define how each router forwards the packet (the “per-hop behavior” of IP traffic). This solution would mean only minor changes to the Internet’s backbone routers and would leave the complexity of implementing QoS features, especially the signaling methods for establishing QoS-enabled links between nodes, to the edges of the network.
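The DS field occupies the byte that IPv4 originally defined as Type of Service: the upper six bits hold the Differentiated Services Code Point (DSCP), and the lower two bits are now used for Explicit Congestion Notification (ECN). The sketch below converts between the two and names a few standardized per-hop behaviors, such as Expedited Forwarding (EF, code point 46) and the Assured Forwarding (AF) classes. The function names are hypothetical, and the lookup covers only a subset of the defined code points.

```python
EF = 46  # Expedited Forwarding code point (RFC 3246)


def dscp_from_ds_field(ds_field: int) -> int:
    """Extract the 6-bit DSCP from the 8-bit DS field (the old IPv4 ToS byte)."""
    return (ds_field >> 2) & 0x3F


def ds_field_from_dscp(dscp: int, ecn: int = 0) -> int:
    """Place a DSCP in the upper six bits; the low two bits carry ECN (RFC 3168)."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must fit in 6 bits")
    return (dscp << 2) | (ecn & 0x3)


def per_hop_behavior(dscp: int) -> str:
    """Name the standard per-hop behavior for a DSCP (illustrative subset)."""
    if dscp == 0:
        return "Default (best effort)"
    if dscp == EF:
        return "EF"
    af_class, drop_precedence = dscp >> 3, (dscp >> 1) & 0x3
    if 1 <= af_class <= 4 and 1 <= drop_precedence <= 3 and (dscp & 0x1) == 0:
        return f"AF{af_class}{drop_precedence}"  # Assured Forwarding (RFC 2597)
    if (dscp & 0x7) == 0:
        return f"CS{dscp >> 3}"                  # Class Selector (RFC 2474)
    return "unrecognized"


print(hex(ds_field_from_dscp(EF)))                 # 0xb8
print(per_hop_behavior(dscp_from_ds_field(0xB8)))  # EF
print(per_hop_behavior(10), per_hop_behavior(40))  # AF11 CS5
```

On many Unix-like systems, an application can request a DS field value for its outgoing packets through the `IP_TOS` socket option; whether routers honor the marking depends on the network's policy.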